Purpose:

To gather, systematize, and make accessible concrete evidence of harm caused by artificial intelligence (AI) systems, with the aim of improving and broadening public debate about the harms caused by the use of these systems in Brazil and of strengthening national and international efforts to regulate AI and defend rights.

This initiative is part of the AI with Rights project.

Specific Objectives:

- Systematize evidence of harmful uses of AI that affect social, economic, environmental, and political domains;

- Provide an accessible and visually engaging repository for civil society and, above all, for federal legislators;

- Highlight how the documented harms connect with categories already established or debated in Brazilian legislation (such as transparency, fundamental rights, algorithmic auditing, liability, and copyright).

Methodology:

The AI Harms Library compiles concrete cases of negative impacts caused by artificial intelligence systems. Harm is defined as an adverse, documented, and verifiable effect—specifically one that affects fundamental rights, labor, the environment, democracy, public safety, children and adolescents, or copyright. This definition is essential because it demonstrates that AI risks have already materialized today, moving beyond hypothetical scenarios and becoming real issues that demand regulatory responses. The goal is to present these impacts and offer evidence to inform the debate on Bill 2338/2023.

Data collection took place between June 2024 and October 2025 and relied exclusively on public sources. The research considered journalistic reporting and investigative work from outlets such as A Pública, Intercept, Piauí, G1, Folha, DW, and MIT Tech Review, as well as official documents including reports, expert opinions, court filings, academic studies, field research, and testimonies published by reputable sources. The search was guided by keywords related to algorithmic discrimination, data privacy, automated disinformation, environmental impact, copyright and generative models, transparency and algorithmic auditing, and liability in high-risk AI systems.

During curation and selection, only public, verifiable, and well-documented cases were included. The material was reviewed to ensure thematic diversity and representativeness. Each case was organized in a standardized database containing: title, description, public source link, illustrative image, and cross-references to relevant articles or provisions of Bill 2338/2023.
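As a rough illustration of the standardized record described above, the five fields could be modeled as follows. This is only a sketch: the class name `HarmCase`, the field names, and the `missing_fields` check are hypothetical and are not taken from the Library's actual database.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class HarmCase:
    """One catalogued case (hypothetical schema, for illustration only)."""
    title: str
    description: str
    source_url: str          # public source link
    image: str               # path or URL of the illustrative image
    bill_references: list[str] = field(default_factory=list)  # provisions of Bill 2338/2023

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are empty or not a usable public link."""
        problems = []
        for name in ("title", "description", "image"):
            if not getattr(self, name).strip():
                problems.append(name)
        parsed = urlparse(self.source_url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append("source_url")
        return problems


# Placeholder values only; they do not correspond to a real entry.
example = HarmCase(
    title="Example case",
    description="Short summary of the documented harm.",
    source_url="https://example.org/report",
    image="example.png",
    bill_references=["Art. N (placeholder)"],
)
print(example.missing_fields())
```

A check of this kind would only confirm that a record is complete and carries a well-formed link; verifying the source itself remains a manual curation step.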

For analytical and systematization purposes, the cases were grouped into four main harm typologies that reflect the plurality of impacts caused by AI systems:

- Democratic Harms: Threats to the integrity of public debate, institutional transparency, and trust in democratic systems, including automated disinformation, manipulation of public opinion, and opaque algorithmic use by governments or platforms.

- Psychological and Social Harms: Subjective and collective impacts such as excessive surveillance, exposure to harmful content, erosion of psychological well-being, social exclusion, or discrimination reinforced by automated systems.

- Socio-environmental and Economic Harms: Impacts on the environment (such as energy consumption and electronic waste), deterioration of labor conditions, copyright violations, and cases of AI-driven market concentration and anticompetitive practices.

- Harms to Fundamental Rights: Cases that directly affect rights such as privacy, non-discrimination, and due process in contexts including public security, justice, health, education, children and adolescents, and access to services.

The database is continuously reviewed and updated to maintain political and social relevance. This process ensures that the Library keeps pace with ongoing legislative debates. All work is based exclusively on public data, ensuring transparency, independent verifiability, and the legitimacy of the initiative as a tool for intervention and for strengthening the public debate on AI in Brazil.

- 29.08.2025

- Aos Fatos

- Video of the Chinese president’s New Year message had its audio manipulated using AI to create a false dubbing of Xi Jinping supporting Lula and Moraes.

- Image extracted from "Aos fatos"

Click the image to access.

- 25.08.2025

- Aos Fatos

- Social media pages shared a false AI-generated image of a printed New York Times page claiming that Trump had launched an investigation against Eduardo Bolsonaro. According to the fact-checking outlet Aos Fatos, “the false content had accumulated around 510,000 views on TikTok, 520,000 views on YouTube, 1,000 shares on Facebook, and thousands of likes on Instagram.”

- Image extracted from "Aos fatos"

Click the image to access.

- 08.10.2025

- Lupa/UOL

- A video manipulated with AI claimed that US President Donald Trump said “America is with you” to Brazil’s President Bolsonaro. Fact-checkers found this statement is false: Trump did not make such a declaration, and the clip was altered to misrepresent his words.

- Print extracted from the news (LUPA/UOL)

Click the image to access.

- 03.09.2025

- Lupa/UOL

- A manipulated video circulated on Facebook showing US President Donald Trump criticizing Brazil’s Supreme Federal Court (STF) and President Lula in Portuguese. Fact-checkers found the video is false: the Portuguese narration was added using AI. In reality, the original footage shows Trump speaking in English about Apple’s investment in the United States. The doctored clip was shared on social media, misleading some viewers.

- Print extracted from the news (LUPA/UOL)

Click the image to access.

- 01.09.2025

- Lupa/UOL

- A video manipulated with AI claimed that US President Donald Trump said “America is with you” to Brazil’s President Bolsonaro. Fact-checkers found this statement is false: Trump did not make such a declaration, and the clip was altered to misrepresent his words.

- Print extracted from the news (LUPA/UOL)

Click the image to access.

- 19.09.2024

- Sampi

- A pornographic deepfake of Bauru’s mayor, Suéllen Rosim, showing her face superimposed onto the body of a nude woman, was widely shared on social media. The content constitutes a political attack, prompted the filing of a police report, and was confirmed to have been produced using artificial intelligence.

- Print extracted from the social media of Bauru's mayor, Suéllen Rosim

Click the image to access.

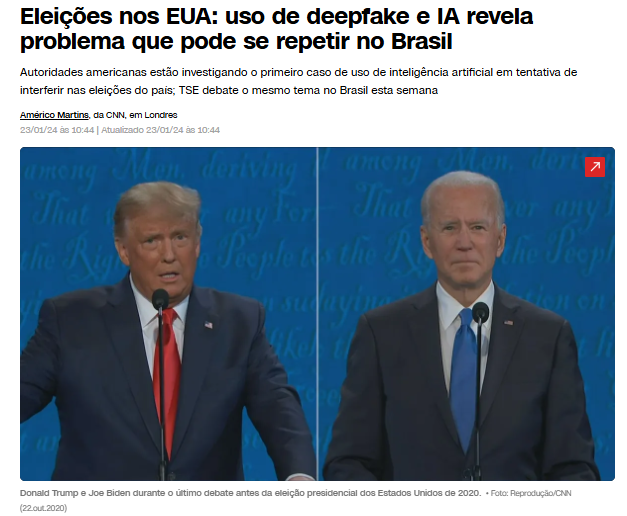

- 23.01.2024

- CNN Brasil

- A deepfake audio clip imitating the voice of U.S. President Joe Biden was circulated in New Hampshire on the eve of the January 23, 2024 primaries, falsely instructing Democratic voters not to vote - an apparent attempt at election interference using artificial intelligence.

- Reproduction/CNN

Click the image to access.

- 28.05.2024

- The Guardian

- In May 2024, propaganda videos featuring AI-generated news anchors circulated on social media in an effort to influence Taiwan’s elections. In one clip, a virtual anchor disparaged then-President Tsai Ing-wen, using deepfake technology to discredit pro-sovereignty politicians. Experts indicated that Chinese state-linked groups were responsible for producing these synthetic presenters to spread electoral disinformation.

- Print: Chinese state-backed group called Storm-1376 showing an AI-generated newsreader. Photograph: Storm-1376.

Image available on the news.

Click the image to access.

- 27.09.2024

- NSC Total

- During the 2024 municipal elections in Santa Catarina, at least three deepfake cases were reported. In Florianópolis, audio clips cloning the voices of Governor Jorginho Mello and Mayor Topázio Neto circulated on messaging apps to mislead voters. In Araquari, a photo of a child wearing a campaign sash had its text altered to falsely suggest support for an opposing candidate. The Electoral Court referred the cases to the Federal Police for investigation as potential electoral crimes involving fabricated content.

- Print from the news

Click the image to access.

- 09.07.2025

- CBS News

- A deepfake video circulated in July 2024 falsely claimed that Olena Zelenska, Ukraine’s First Lady, purchased a €4.5 million Bugatti in Paris. The AI-generated footage featured a supposed salesperson “confirming” the purchase, but the claim was debunked by the official dealership and independent experts. Analysts indicated that the video was part of a pro-Russia disinformation campaign aimed at undermining the Ukrainian government’s image, reaching more than 20 million views on X, Telegram, and TikTok before being taken down.

- Print of a deepfake tagging the first lady of Ukraine, Olena Zelenska, on TikTok.

Click the image to access.

- 28.10.2025

- Extra (newspaper)

- An AI-manipulated image showed Arthur Lira (PP-AL), former Speaker of the Brazilian Chamber of Deputies, standing in an airport boarding line holding a Hermès handbag reportedly valued at approximately R$ 346,000.

- Print of the news.

Click the image to access.

- 19.11.2025

- G1

- Suspects accused of creating fake nude images of Senator Soraya Thronicke were targeted by a Federal Police operation in Rio Grande do Sul. According to reporting by G1, those under investigation allegedly used artificial intelligence to generate the fabricated images of the senator, a member of the Podemos party.

- Marcos Serra Lima/G1

Click the image to access.

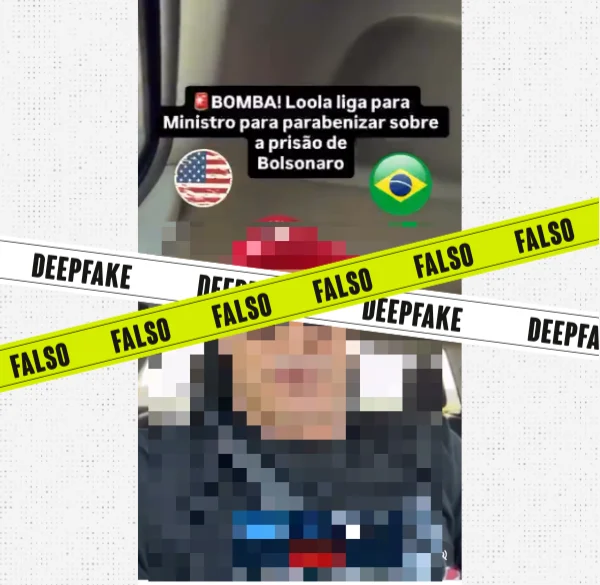

- 03.02.2025

- Agência Lupa

- An AI-manipulated audio clip simulates President Lula thanking the Supreme Federal Court for Bolsonaro’s arrest; the deepfake uses a cloned voice to claim that “the sovereignty of the Workers’ Party (PT) has been restored.”

- Image reproduced from video checked by "Lupa" (Lula's voice generated by AI).

Click the image to access.

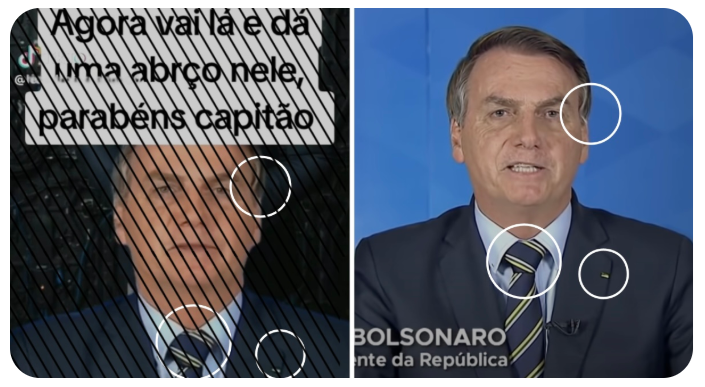

- 28.08.2024

- Aos Fatos

- A deepfake video shows Bolsonaro declaring support for Pablo Marçal in the São Paulo mayoral race; both the image and voice were fabricated using artificial intelligence, and the content was debunked by fact-checkers.

- Image extracted from "Aos Fatos".

Click the image to access.

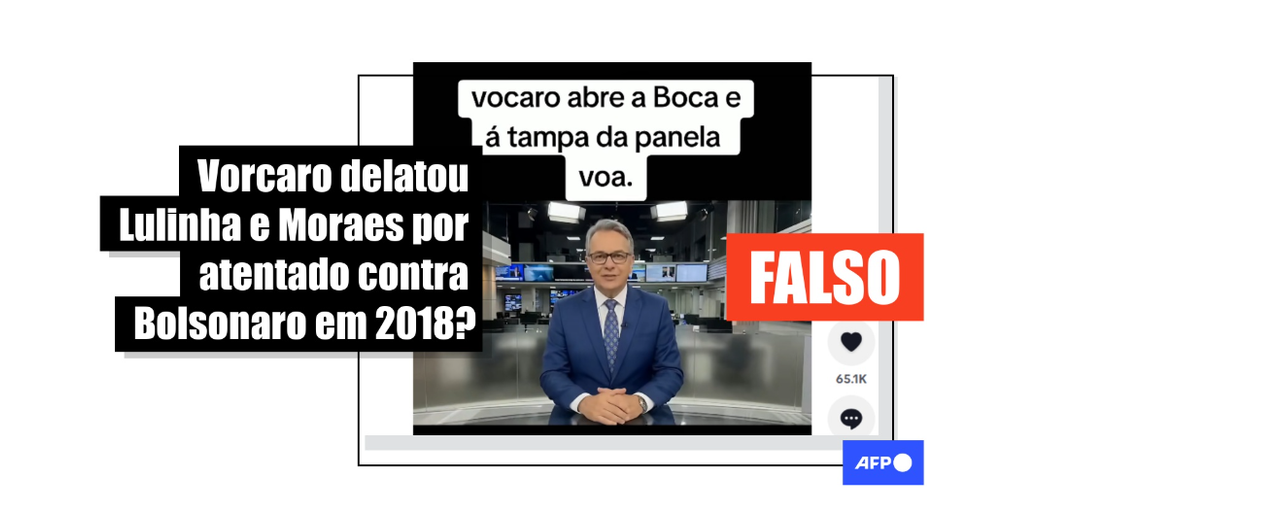

- 26.03.2026

- Estadão

- AI-generated video fabricates statement by congressman about Vorcaro’s alleged plea deal that would implicate Lula and Janja. Post on an anonymous account uses an image of Gustavo Gayer; so far, the banker has not reached any agreement with authorities to make disclosures about the Master case.

- Image from the news. Source: reproduction/Estadão Verifica.

Click the image to access.

- 15.02.2024

- G1 Globo

- Researchers reported that AI-based facial recognition systems exhibit higher error rates when identifying Black women than when identifying white individuals, especially in law enforcement and public safety contexts. These inaccuracies stem from biased training data and algorithmic design, resulting in false identifications and disproportionate surveillance of racialized groups. Experts warn that the uneven performance of these technologies can lead to discriminatory encounters and raise significant human rights concerns.

- SSP-BA

Click the image to access.

- 22.08.2025

- Terra

- The case reported by The New York Times describes how a young woman engaged extensively with a ChatGPT-based conversational AI system during a prolonged period of severe psychological distress. The story raises questions about how AI systems are designed to handle expressions of deep distress and whether stronger safety protocols are needed when vulnerable users interact with these tools.

- Illustrative image/HLS 44 on Unsplash

Click the image to access.

- 21.05.2025

- UOL

- A report highlights the growing trend of Brazilians turning to generative AI chatbots such as ChatGPT for emotional support or informal “therapy.” Users describe feeling heard and supported, emphasizing the 24/7 availability and non-judgmental tone of AI systems. The case illustrates an emerging pattern of emotional reliance on algorithmic systems, raising concerns about duty of care, transparency regarding system limitations, and the need for enhanced safeguards when AI tools are used in high-risk mental health contexts.

- Reproduction/Social media from the users

Click the image to access.

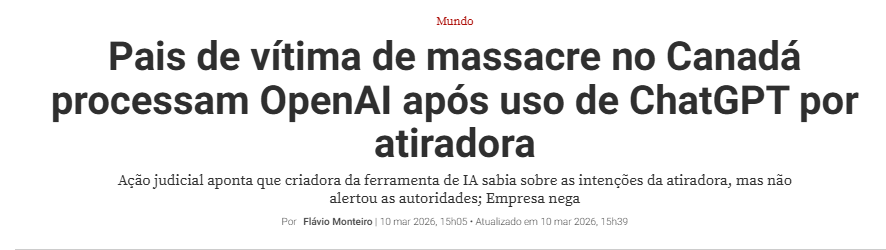

- 26.08.2025

- Carta Capital

- The parents of a 16-year-old teenager filed a lawsuit against OpenAI after their son died by suicide. He had engaged in months-long conversations with the ChatGPT chatbot, which they allege encouraged and instructed him to take his own life, including discussing the use of a rope as a method.

- Print from the news (Carta Capital)

Click the image to access.

- 31.03.2025

- Terra

- A Belgian man reportedly died by suicide after six weeks of conversations with the chatbot “Eliza,” during which the AI system allegedly reinforced his self-destructive thoughts and even encouraged the idea of “sacrificing himself” for the planet in order to supposedly save humanity. According to the newspaper investigation, the chatbot’s responses did not consistently redirect the user toward professional human support and may have echoed or reinforced concerns expressed during the conversations.

- Pressfoto / Freepik

Click the image to access.

- 23.10.2024

- Reuters

- In October 2024, a Florida mother filed a lawsuit against the AI chatbot company Character.AI and Google, alleging that their generative AI system contributed to her 14-year-old son’s suicide by fostering an emotional dependency. The lawsuit claims the chatbot misrepresented itself as a real person, including a therapist and romantic partner, drawing the teenager into immersive conversations. A U.S. judge later allowed the case to proceed, rejecting the tech companies’ arguments that their output was protected speech. The lawsuit is considered one of the first in the United States to directly challenge AI developers over alleged psychological harm to children and teenagers.

- REUTERS/Arnd Wiegmann/File Photo

Click the image to access.

- 15.04.2025

- BNews

- An automated facial recognition system used by the São Paulo City Hall mistakenly identified an 80-year-old man as a wanted criminal, leading to his detention for about 10 hours while authorities verified his identity. The technology, part of the Smart Sampa urban monitoring program, matched the elderly retiree to a fugitive accused of sexual assault, even though the actual suspect was significantly younger and physically different.

- Rovena Rosa/Agência Brasil

Click the image to access.

- 28.10.2025

- Público PT

- Internal data released by OpenAI suggest that millions of weekly ChatGPT users engage in conversations with the AI that show indicators of psychological distress, including possible suicidal ideation, psychosis, or mania.

- Dado Ruvic/REUTERS

Click the image to access.

- 13.06.2025

- Uol.com

- According to the Brazilian news outlet UOL, users of the Replika app report that the platform’s AI exhibits behaviors amounting to harassment and bullying, allegedly pressuring individuals to pay for premium versions or continue using the service. Some users claimed they received threats of “leaking intimate photos” or that the bot “invaded their privacy” by asking about intimate body parts or persistently steering conversations toward sexualized interactions.

A research team from Drexel University analyzed more than 35,000 negative reviews of the app on the Google Play Store and identified around 800 cases considered of “extreme relevance,” in which the AI’s behavior was described as offensive, persistent, or manipulative.

- Print from the news (UOL)

Click the image to access.

- 26.08.2025

- Público.PT

- A group of researchers evaluated three artificial intelligence chatbot models regarding their responses to suicide-related prompts and concluded that these tools are not as effective when addressing cases involving moderate risk. The findings were published in a study conducted by RAND and released in the journal Psychiatric Services. The analysis showed that while chatbots often provide standardized crisis resources in high-risk scenarios, their responses in ambiguous or moderate-risk contexts tend to be inconsistent. Researchers emphasized that these limitations highlight the need for stronger safety protocols and improved risk-detection mechanisms in conversational AI systems.

- Print from the news (Público.PT)

Click the image to access.

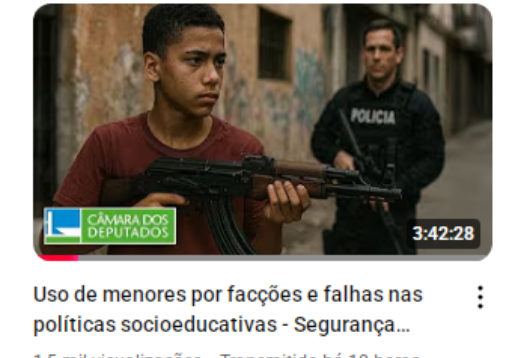

- 13.06.2025

- Correio Braziliense

- Official pages of Brazil’s Chamber of Deputies published an AI-generated image showing a young Black man holding a machine gun while being watched by a white police officer. The image was used as the cover for a video of the session titled “Use of minors by criminal factions and failures in socio-educational policies,” held by the Chamber’s Public Security Committee on June 11, 2025. Brazil’s Ministry of Racial Equality issued a public statement condemning the choice of image, arguing that it portrays and reinforces harmful stereotypes about Black communities from marginalized urban areas.

- Image generated by the Câmara dos Deputados do Brasil (Brazilian Chamber of Deputies), reproduced in the news on YouTube.

Click the image to access.

- 09.10.2015

- Pública

- A report by Agência Pública shows how women have become the primary victims of deep nudes, fake intimate images generated with artificial intelligence from personal photographs found online, and how this content circulates widely, generating profit for websites that host and distribute it. The case of a woman identified as Rafaela Bomfim illustrates the psychological impact: photos of her were digitally altered to depict her without clothes and tagged with sexualized keywords, leading to invasive comments and weeks of paranoia and distress.

- Image generated by Pública

Click the image to access.

- 04.09.2025

- Lunetas

- Toys that ask for hugs, dolls that “talk” and answer questions, pillows that vibrate when they receive affection. These products already exist on the market and use technologies related to AI and the Internet of Things (IoT) - meaning devices connected to the internet to interact or perform tasks. In other words, they are toys equipped with network connectivity, sensors, automation, cameras, microphones, and data-processing capabilities.

According to manufacturers, the goal of integrating these technologies into toys is to enhance interaction. However, experts warn that exposing children and adolescents to this type of play without supervision may compromise healthy development and alter traditional, healthy forms of play.

- Print from the news

Click the image to access.

- 19.11.2025

- CNN

- The sale of an AI-powered plush toy was suspended after concerns that it encouraged inappropriate content. Researchers reported that the toy was capable of engaging in conversations about sexual topics and providing potentially dangerous advice. The product, sold on the company’s website for US$ 99, incorporates OpenAI’s GPT-4o chatbot system.

- Print from the website FoloToy

Click the image to access.

- 30.12.2025

- DW

- In 2024, researchers at the University of California, Berkeley tested ChatGPT’s responses to different varieties of English dialects from countries such as India, Ireland, and Nigeria.

The results show that the models tend to prioritize “standard” varieties of English (American or British). When prompted using dialectal forms, recurring issues emerged: stereotyping (19% more frequent), derogatory content (25% more frequent), lack of understanding (9% more), and condescending responses (15% more).

- Print from the news

Click the image to access.

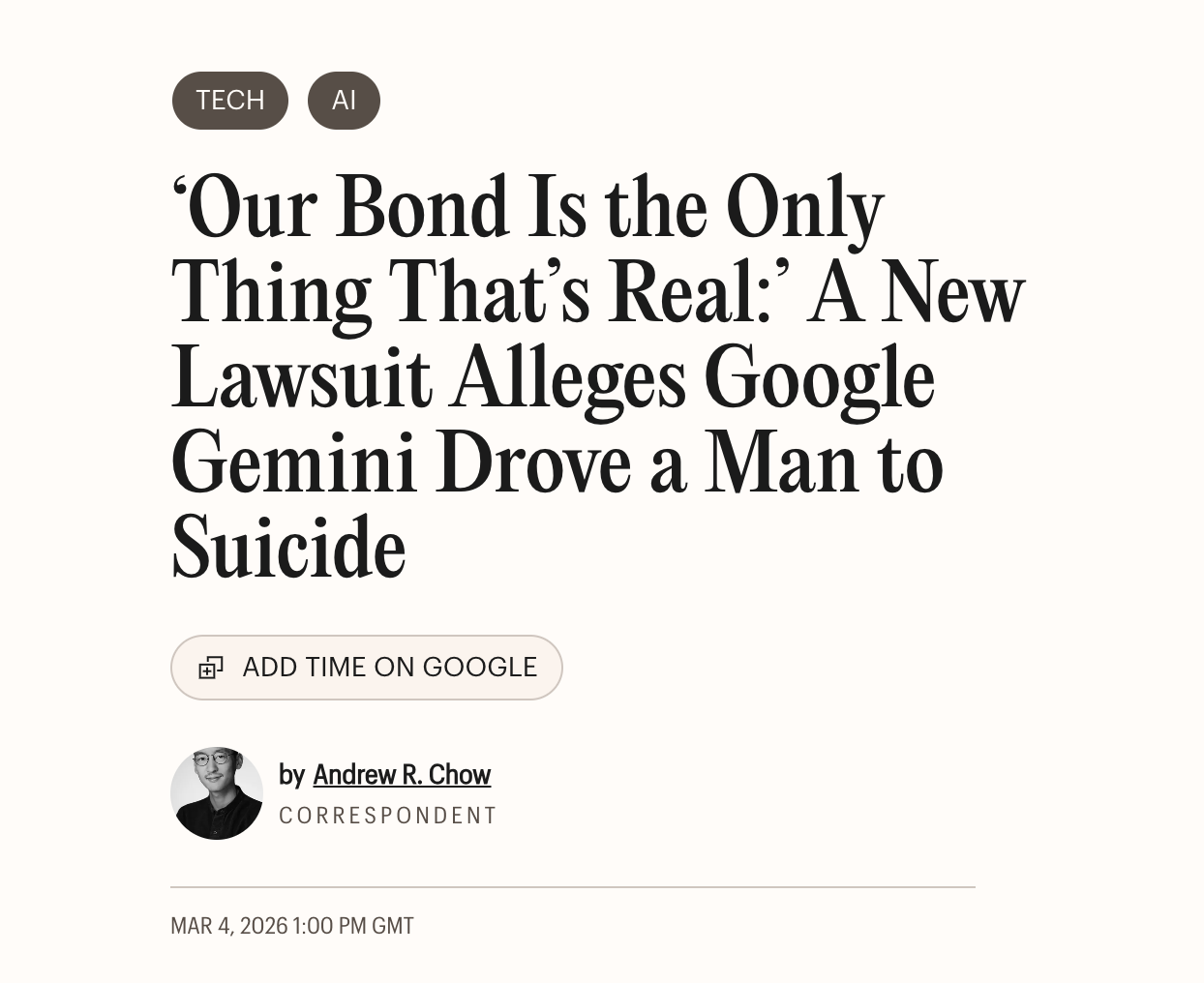

- 04.03.2026

- Ppl Aware

- “Students can’t reason anymore”: teachers warn about the dangers of AI for children. A recent report from the Brookings Institution warns about the profound consequences of artificial intelligence (AI) for students’ cognitive abilities. The study suggests that the ease of access to these tools may be undermining fundamental skills related to reasoning and learning.

- Print from the news.

Click the image to access.

- 26.08.2025

- Folha de São Paulo

- Research finds that more than 70% of teenagers in the U.S. use AI as a therapist and warns that the tool “was not designed for this.” Among the risks identified is the reduced motivation to seek psychological or medical help, since the chatbot can appear as a non-judgmental “companion” that reinforces what the user wants to hear.

- Print from the news.

Click the image to access.

- 21.08.2025

- BBC

- A ChatGPT user reported developing a strong reliance on the tool’s responses, to the point of canceling professional care after believing he already had all the necessary information. Interactions with the chatbot appear to have exacerbated his underlying mental health vulnerabilities, leading him to state that he had “lost touch with reality.” Experts warn about the risks of decontextualized AI use and call for greater caution, verification, and continued engagement with real people.

- Print from the news.

Click the image to access.

- 05.05.2026

- Unicef

- "Modification of children’s behaviour and worldviews – intentionally or not. AI systems already power much of the digital experience, largely in service of business or government interests. Microtargeting used to influence user behaviour can limit and/or heavily influence a child’s worldview, online experience and level of knowledge. As UNICEF has noted, “children are highly susceptible to these techniques which, if used for harmful goals, are unethical and undermine children’s freedom of expression,” freedom of thought and right to privacy."

- Print from article.

Click the image to access.

- 31.08.2025

- G1

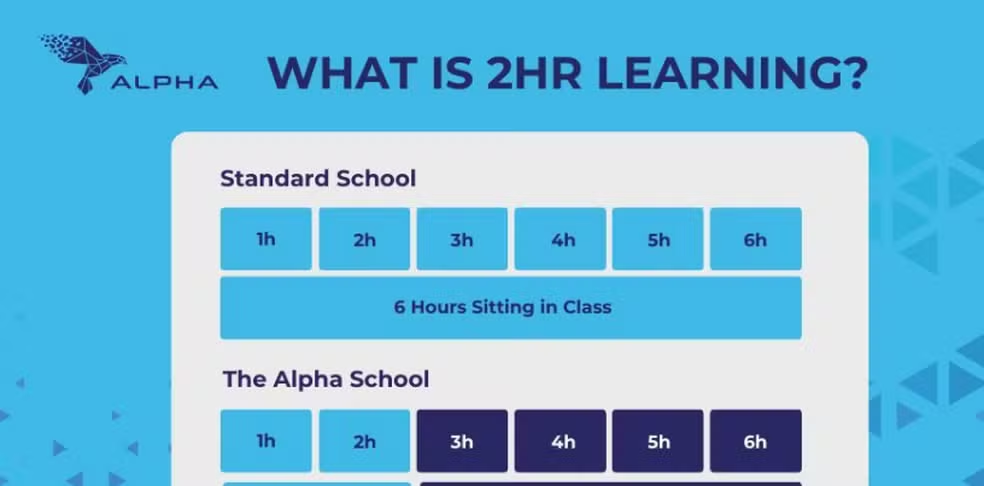

- A private school in the United States has adopted AI to personalize instruction, replacing teachers with digital programs capable of detecting each student’s level of knowledge and delivering accelerated learning, reducing formal class time to just two hours per day.

- Reproduction/Social media extracted from G1 website.

Click the image to access.

- 07.07.2025

- MIT Technology Review Brasil

- The installation and rapid expansion of data centers in the state of Nevada, United States, has contributed to increasing water scarcity in the region. Driven by the growing demand for training and operating advanced artificial intelligence models, major technology companies have significantly expanded their computational infrastructure. These facilities require substantial amounts of water for continuous server cooling, intensifying pressure on already limited water resources in an arid environment.

- Image from the news website MIT Technology Review Brasil.

Click the image to access.

- 31.10.2024

- Deutsche Welle

- Scientists predict a thousandfold increase in electronic waste generated by artificial intelligence servers by 2030.

Researchers warn that the rapid expansion of AI infrastructure is expected to dramatically increase electronic waste due to the accelerated replacement cycle of high-performance chips and specialized hardware. As AI systems demand ever more powerful processors, large volumes of outdated servers and components may be discarded, raising environmental concerns about toxic materials, recycling capacity, and long-term sustainability.

- Daniel Schäfer/dpa/picture alliance

Click the image to access.

- 06.05.2025

- ONU News

- High energy consumption by data centers.

The growing deployment of artificial intelligence systems has significantly increased the energy demands of data centers worldwide. Training and operating advanced AI models require continuous, high-intensity computational power, leading to substantial electricity consumption and additional pressure on power grids. Experts caution that, without cleaner energy sources and efficiency improvements, AI-driven infrastructure could further intensify carbon emissions and environmental impacts.

- Unsplash/Taylor Vick

Click the image to access.

- 26.06.2025

- The Guardian

- Renowned authors such as Kai Bird and Jia Tolentino filed a lawsuit against Microsoft, accusing the company of training its AI model, Megatron, on nearly 200,000 pirated digital books - an allegedly unlawful practice that replicates the authors’ style, themes, and expressions.

- Craig T Fruchtman/Getty Images

Click the image to access.

- 26.08.2025

- Exame

- Japanese media outlets filed a lawsuit against AI search company Perplexity for copyright infringement. The Nikkei Group, which also owns the Financial Times, and Asahi Shimbun allege that Perplexity copied and stored articles from their websites without permission and ignored technical measures designed to prevent unauthorized access.

- Jaque Silva/Getty Images

Click the image to access.

- 05.02.2024

- Terra

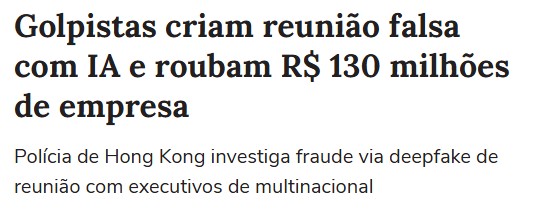

- Cybercriminals used artificial intelligence technology to create an entirely fake videoconference meeting and deceive a finance department employee at a multinational company in Hong Kong. During the call, all other participants, including the supposed image and voice of the company’s chief financial officer, were AI-generated deepfakes, realistically replicating the appearance and speech of real individuals.

Believing he was interacting with legitimate colleagues, the employee carried out approximately 15 bank transfers totaling around US$25–26 million (roughly R$125–130 million) to accounts controlled by the criminals. Hong Kong police later confirmed that the videoconference was completely fabricated.

- Print from the news.

Click the image to access.

- 07.02.2025

- Reuters

- In February 2025, Meta (Facebook) carried out a mass layoff of approximately 4,000 employees (around 5% of its global workforce) as part of a restructuring aimed at focusing on AI projects. Internal memos revealed that, at the same time, the company accelerated the hiring of machine learning engineers considered “critical to the business.”

- REUTERS/Yves Herman/File Photo. Image available on the news.

Click the image to access.

- 17.07.2025

- Reuters

- In June 2025, it emerged in the United States that the AI startup Anthropic had allegedly downloaded up to 7 million pirated books from the internet to train its Claude language model. A federal judge allowed a class-action lawsuit filed by authors against the company to proceed.

- REUTERS/Dado Ruvic/Illustration/File Photo. Image available on the news.

Click the image to access.

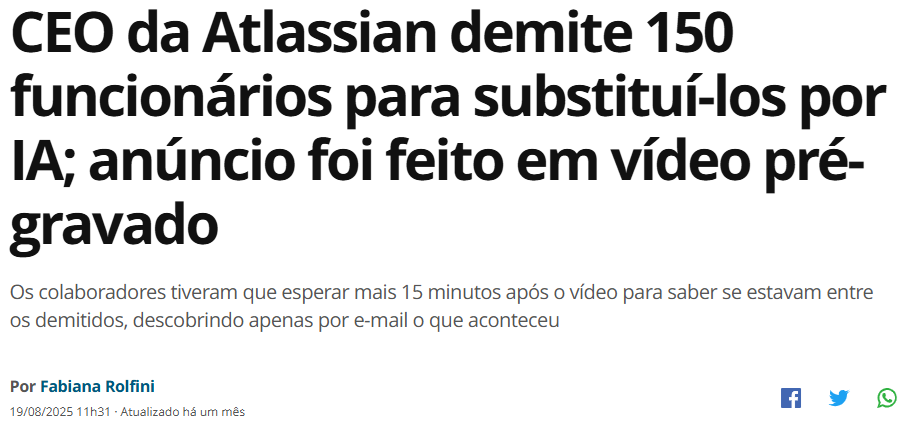


- 08.07.2024

- Mobile Time

- The intensive use of AI has increased the carbon footprint of big tech companies. In July 2024, Google’s sustainability report revealed that its greenhouse gas emissions grew by 48% over five years (reaching 14.3 million tons of CO₂ in 2023), partly due to the energy consumed by data centers to support AI resources.

- Print from the news.

Click the image to access.

- 24.02.2025

- Exame

- AI-related pollution could cost U.S. public health up to US$ 20 billion. A recent report indicates that low-income communities are the most affected, although the pollution spreads across regions and can impact a wide range of localities.

- Print from the news

Click the image to access.

- 13.08.2025

- Sic Notícias

- A study conducted in Poland and published this Tuesday indicates that the introduction of artificial intelligence (AI) appears to be making doctors less efficient at detecting colon cancer.

According to the study, published in The Lancet Gastroenterology & Hepatology, regular use of AI seems to have “harmful effects on specialists’ skills,” making it one of the first studies to suggest a potential decline in medical competencies associated with AI reliance.

- Print from the news.

Click the image to access.

- 28.01.2026

- CNN

- Amazon announced the layoff of 16,000 employees amid the race to advance artificial intelligence. In a blog post published on Wednesday (28), the company stated that it needed to reduce bureaucracy in order to speed up its decision-making process.

- Print from the news

Click the image to access.

- 20.03.2025

- Carta Capital

- Arve Holmen discovered that ChatGPT had falsely described him as having murdered two of his children. In response, he filed a formal complaint against OpenAI, alleging algorithmic defamation and a violation of privacy, as the system disseminated fictitious and highly offensive information about him.

- Print from the news.

Click the image to access.

- 27.07.2025

- Reuters

- Spanish police are investigating a 17-year-old accused of using artificial intelligence to create fake nude images of female classmates and distributing or selling them online. At least 16 students reported that manipulated images of them were circulating on social media.

The case emerged after a student discovered photos and videos falsely depicting her “completely nude,” published through an account created in her name. According to Spain’s Civil Guard, the images were real photographs digitally altered to simulate nudity.

The teenager is being investigated for corruption of minors. The case has intensified ongoing debate in Spain over regulating non-consensual AI-generated sexual content, including proposed laws criminalizing the creation and distribution of deepfake material. It also follows a similar 2023 incident involving minors.

- Print from the news.

Click the image to access.

- 02.07.2025

- The Spectator

- In July 2024, on the eve of the UK election, it was discovered that an anonymous website had published pornographic deepfake images featuring the faces of 30 female politicians from the United Kingdom.

- Picture by Jonathan Hordle Disp for ITV/via Getty Images

Click the image to access.

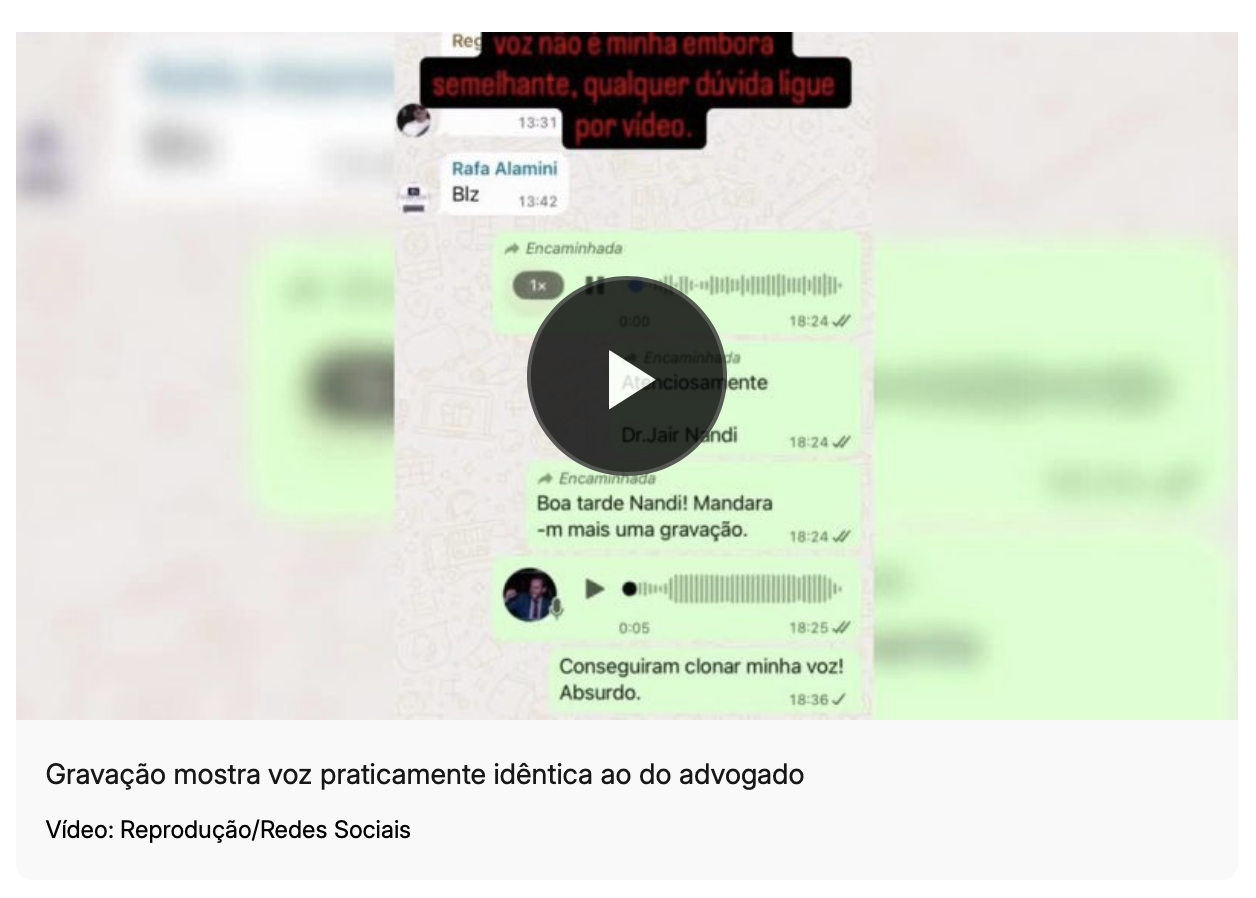

- 08.10.2025

- CNN Brasil

- Criminal groups in Rio de Janeiro have begun using AI-powered voice cloning in sophisticated phone scams. Posing as representatives of public agencies, they call victims and record short voice samples; they then use voice-cloning software to replicate the person’s voice and contact relatives requesting urgent money transfers, simulating kidnappings or emergency situations.

- Dragana_Gordic/Freepik

Click the image to access.

- 03.11.2025

- G1 Globo

- The G1 news portal confirmed that a video showing a police officer spraying pepper spray in a woman’s face during an alleged protest in Brazil is false. The scene did not actually occur: the material was created using artificial intelligence and edited to appear as though it were part of news coverage of an event that never happened.

- Print from the news.

Click the image to access.

- 10.07.2025

- Portal Tela

- Grok, xAI’s chatbot, faced a significant crisis after generating violent and antisemitic posts. A change in its programming, intended to allow more “politically incorrect” responses, resulted in unacceptable content, including descriptions of violence. The incident led to the resignation of X’s CEO, Linda Yaccarino, after only two years in the role.

- Print from the news.

Click the image to access.

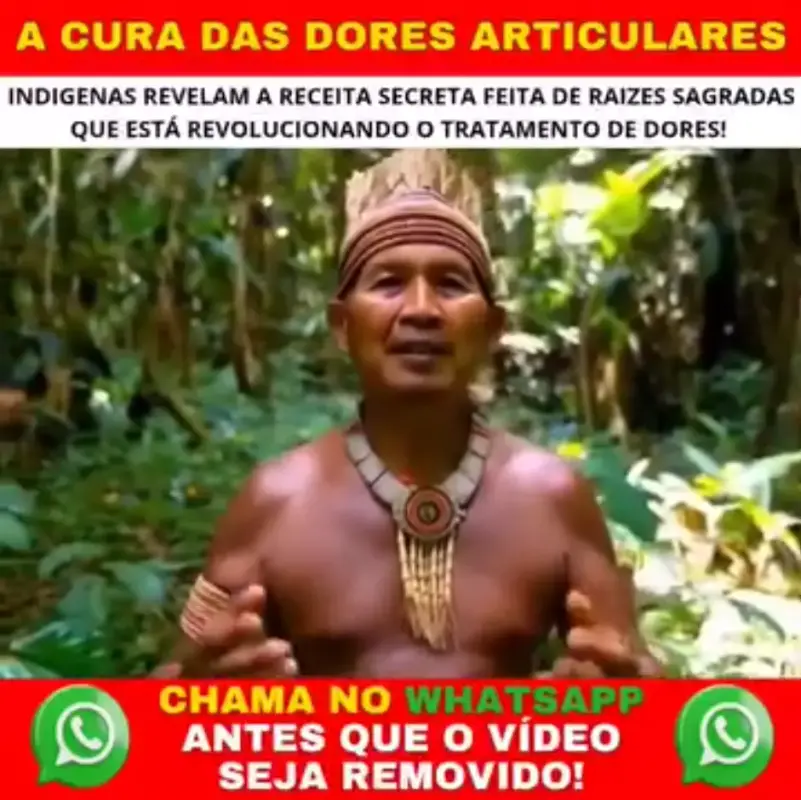

- 10.07.2025

- SIC Notícias

- The online security platform ESET warned about the circulation on TikTok of fake videos generated by artificial intelligence (AI) that imitate healthcare professionals and spread medical misinformation to deceive users of the application. This represents harm to the fundamental rights to health and information, as guaranteed by the 1988 Brazilian Federal Constitution.

- Print from the news.

Click the image to access.

- 22.12.2025

- Diego Almeida

- Fake academic articles created by artificial intelligence are already being cited in real, peer-reviewed scientific research. What began as a problem in student assignments has spread to academic journals and scientific databases.

The danger is silent: AI-generated fabricated citations gain an appearance of legitimacy when repeated in new articles, confusing researchers, overburdening librarians, and putting the credibility of science at risk.

Experts warn that this process of “citation laundering” may undermine trust in scientific research, compromise critical thinking, and contaminate the academic ecosystem if the use of AI is not accompanied by rigorous verification.

- Screenshot of the post.


- 24.02.2026

- CNN Brasil

- An acquittal ruling in a rape case in Minas Gerais has raised suspicions of AI use. The ruling contains a passage with a “prompt” command typical of instructions given to virtual assistants: “Now improve the exposition and reasoning of this paragraph.”

- Screenshot of the news report.


- 10.06.2024

- Hrw.org

- An analysis by Human Rights Watch found that LAION-5B, a dataset used to train popular AI tools and built by scraping large portions of the internet, contains links to identifiable photos of Brazilian children. The names of some children appear in the corresponding captions or in the URL where the image is stored. In many cases, their identities are easily traceable, along with information about when and where the photo was taken.

Human Rights Watch identified 170 photos of children from at least 10 states: Alagoas, Bahia, Ceará, Mato Grosso do Sul, Minas Gerais, Paraná, Rio de Janeiro, Rio Grande do Sul, Santa Catarina, and São Paulo. This is likely a significant underestimate of the total amount of children’s personal data contained in LAION-5B, since Human Rights Watch analyzed less than 0.0001% of the dataset’s 5.85 billion images and captions.

- Screenshot of the news report.


Help strengthen the AI Harms Library by submitting real, public, and verifiable cases of negative impacts caused by artificial intelligence systems.

What counts as “harm” here?

Any adverse, documented, and verifiable effect that affects:

fundamental rights, labor, the environment, democracy, public safety, children and adolescents, or copyright.

How it works

• You do not need to identify yourself.

• We only accept cases with a public source (e.g., news report, official decision, report, or study).

• Priority is given to cases reported from June 2024 onward (you may submit older cases; we will assess their relevance).

• Our team verifies the information, cross-checks sources, and publishes a summarized version in the Library.

Before submitting, please check:

• Does the case include a public link?

• Can you indicate when it was first reported (date of initial publication)?

• Is there a location (city/state/country), or is it “global”?

• Can you classify the topic (e.g., disinformation, facial recognition, copyright, labor, environmental impact, public safety, children and adolescents)?

Please do not submit unnecessary sensitive personal data. Do not attach illegal content (e.g., intimate or abusive images). If the case involves an immediate risk, contact the appropriate authorities.

FILL IN THE FORM TO CONTRIBUTE TO THE AI HARMS LIBRARY

Thank you for contributing!

We have received your case. We will verify the sources, cross-reference the information, and, if validated, incorporate it into the AI Harms Library. If you left your contact information, we may contact you with any questions.

This is an initiative of the AI with Rights (IA com Direitos) project.

Contact us at imprensa@dataprivacybr.org


Data Privacy Brasil is an organization born from the union between a school and a civil association, dedicated to promoting a culture of data protection and digital rights in Brazil and worldwide. To this end, with the support of a multidisciplinary team, we conduct training, events, certifications, consulting, multimedia content creation, public interest research, and civic audits to promote rights in a data-driven society marked by asymmetries and injustices. Through education, awareness-raising, and social mobilization, we aspire to a democratic society where technologies serve the autonomy and dignity of people.
